Introduction

Real-time vision AI models have been quietly changing the world, and most people had no idea about it until recently. We used to think machines could never truly see. Not really. They could record. They could store. But understanding what they were looking at? Responding to it in real time? That felt like science fiction.

Not anymore.

Today, a system can spot early-stage cancer in a medical scan within seconds, often before a specialist has even had a chance to sit down and review it. A drone can survey an entire city after a flood and map the damage in under five minutes. A satellite can detect illegal deforestation the moment it begins. These things are happening right now, in 2026, in hospitals, farms, and cities around the world.

In this guide, you will learn exactly what real-time vision AI models are, why they matter so much, and how they are changing everything, from healthcare to climate monitoring. Let’s explore together.

What Are Real-Time Vision AI Models?

Real-time vision AI models are AI systems trained to analyze visual data (photos, videos, satellite imagery, or drone footage) and instantly deliver useful, actionable results. Unlike a regular camera that just records, these systems see, understand, and respond.

They work using deep learning, a type of AI that learns from huge amounts of data. The systems use tools like convolutional neural networks (CNNs) and vision transformers. These can look at an image and not only spot what is in it, but also understand what it means and predict what might happen next.
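To make the idea of a convolutional layer a little more concrete, here is a minimal sketch in plain Python. The function name `convolve2d`, the tiny 4x4 image, and the hand-written edge kernel are all illustrative assumptions. A real CNN learns its kernel values from training data and stacks many such layers, so treat this as a picture of the core operation, not of any production model.

```python
def convolve2d(image, kernel):
    """Slide a small kernel over a 2D grayscale image (no padding, stride 1).

    Like most deep learning libraries, this computes cross-correlation:
    the kernel is applied as-is, without flipping.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = 0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# Hypothetical 4x4 image: dark on the left (0), bright on the right (9).
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Hand-written kernel that responds to dark-to-bright vertical edges.
edge_kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, edge_kernel)
print(feature_map)  # the middle column lights up where the edge sits
```

The output is largest exactly where the image changes from dark to bright, which is the sense in which a convolutional filter "spots" a visual pattern.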

From Simple Detection to Intelligent Understanding

Vision AI has come a long way. It started by doing basic tasks, like spotting a seatbelt or flagging an intruder on a security camera. Now, according to Gartner, these systems can understand complex situations, predict what is about to happen, and handle high-stakes decisions in real time.

The global computer vision market was worth $19.82 billion in 2024. By 2030, it is expected to reach $58.29 billion, growing at 19.8% yearly (Grand View Research). But the real story is not the money. It is what these systems can now actually do. Models like IBM’s TerraMind, released in 2025 with the European Space Agency, can now connect visual data with language, geography, and time, creating a whole new kind of environmental intelligence.
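The growth figure can be sanity-checked with the standard compound annual growth rate formula. The sketch below is our own arithmetic on the two market values quoted above, not Grand View Research's method, and the function name `cagr` is just an illustrative choice.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.20 means 20% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text, in billions of US dollars.
market_2024 = 19.82
market_2030 = 58.29

rate = cagr(market_2024, market_2030, 2030 - 2024)
print(f"Implied annual growth: {rate:.1%}")
```

The result lands close to the quoted 19.8%. A small gap is expected, since published forecasts may use a different base year or unrounded inputs.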

Edge AI and On-Device Vision

One of the biggest recent breakthroughs is edge AI. This means vision AI can now run directly on local devices, such as cameras, drones, or sensors, without needing to send data to a faraway server.

This matters a lot. It means real-time decisions happen right where they are needed, on a farm, in a factory, or in a disaster zone. For places with slow or no internet, this is a game-changer. Real-time vision AI is no longer just for big tech companies. It is becoming available to everyone, everywhere, and that is what makes it a true gift to all of humanity.

Why Real-Time Vision AI Models Are Truly a Great Gift to Humanity

Not every technology deserves to be called life-changing. But real-time vision AI genuinely earns that title. It is now powering real-time automation, making operations more efficient, and helping people make smarter decisions in industries that touch every part of our lives, from the food we eat to the air we breathe to the disasters we survive.

The Remarkable Growth of Vision AI in 2026

The Roboflow Vision AI Trends 2026 Report, which looked at over 200,000 real-world projects, confirms that 2025 was the turning point. Vision AI moved from a research experiment to become a standard tool used by major companies worldwide. Visual data is now as easy to work with as written text. The physical world is becoming something we can read, search, and act on, at scale. The question is no longer whether vision AI matters. The question is whether you are ready to use it wisely.

8 Powerful Ways Real-Time Vision AI Models Are Changing Human Life

The impact of real-time vision AI is not theoretical. It is already here, already working, and already saving lives. Here are eight areas where it is changing everything.

1. Saving Lives in Healthcare: Faster, Smarter Diagnosis

Perhaps the most immediately life-saving use of real-time vision AI is in medicine. AI systems now analyze X-rays, CT scans, and MRIs to detect cancer, eye disease, and brain conditions, often as accurately as a specialist doctor. Hospitals using these systems are reporting faster results and better outcomes because problems are caught earlier.

Think about what this means for a patient in a rural clinic with no specialist nearby. A real-time AI system can analyze the patient’s scan and flag something unusual within minutes, giving the doctor the information needed to act before it is too late. In much of the world, this is not a luxury. It is the only chance. Risk analytics and decision intelligence tools for health and environmental systems show just how wide-reaching this transformation already is.

2. Feeding the World: Vision AI in Smart, Sustainable Farming

Hunger and food insecurity are two of the biggest problems humanity faces. Real-time vision AI is proving to be a powerful solution. In 2025, farms using computer vision achieved up to 50% faster crop disease detection and used 40% less pesticide, making farming more sustainable and efficient.

Drones and field cameras scan crops all the time. Deep learning models study the images to catch early signs of disease, color changes, unusual textures, abnormal growth, long before a human eye would notice. Farmers get targeted alerts and can treat only the affected area instead of spraying chemicals everywhere. Livestock systems also track animal behavior to spot illness early, reducing animal deaths by up to 30% where these systems are fully used.
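The "treat only the affected area" step can be sketched as a simple threshold over per-tile scores. Everything here is assumed for illustration: the field is split into a 3x3 grid, each tile already has a disease score between 0 and 1 from some upstream model, and 0.7 is an arbitrary alert threshold that a real deployment would tune.

```python
def tiles_to_treat(scores, threshold=0.7):
    """Return (row, col) positions of field tiles whose score meets the threshold."""
    return [
        (r, c)
        for r, row in enumerate(scores)
        for c, score in enumerate(row)
        if score >= threshold
    ]


# Hypothetical disease scores for a field divided into a 3x3 grid of tiles.
field_scores = [
    [0.05, 0.10, 0.08],
    [0.12, 0.85, 0.74],
    [0.07, 0.20, 0.09],
]

alerts = tiles_to_treat(field_scores)
total_tiles = len(field_scores) * len(field_scores[0])
treated_share = len(alerts) / total_tiles

print(alerts)
print(f"Share of field to treat: {treated_share:.0%}")
```

In this made-up field only two of nine tiles are flagged, which is the mechanism behind lower chemical use: the sprayer visits the flagged tiles and skips the rest.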

The smart agriculture technology shaping the future of food production is built on exactly this kind of real-time visual intelligence. Furthermore, clean energy solutions aligned with precision agriculture make sustainable farming even more powerful when both are used together.

3. Responding to Disasters Faster: Vision AI in Emergency Management

When disaster strikes, a flood, wildfire, earthquake, or cyclone, every minute matters. Real-time vision AI is changing how emergency teams assess damage, plan evacuations, and send help. AI-equipped drones can remotely check a disaster zone in as little as four minutes, something that used to take days of on-the-ground surveys.

During the Los Angeles wildfires, AI-driven predictive modeling helped assess fire paths, optimize evacuation routes, and get medical teams to the right places fast. In Africa, AI models now predict locust swarms that threaten food for millions of people, analyzing wind patterns, soil moisture, and plant growth to forecast where they will move next, so communities can prepare in advance.

Explore how climate resilience solutions and genuine survival strategies are being strengthened by vision AI capabilities that give disaster-affected communities their best chance of fast recovery.

4. Watching Over Our Planet: Vision AI for Environmental Protection

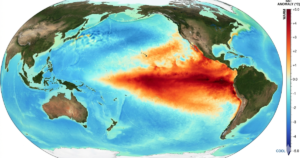

Real-time vision AI is not just watching over people; it is watching over the planet, too. Satellite images processed by deep learning models can detect deforestation within hours, track glaciers melting, map ocean pollution, and monitor biodiversity across millions of hectares, all at the same time.

IBM’s TerraMind model, released in 2025 with the European Space Agency, introduced a new way for AI to study environmental change across many different types of data at once. OroraTech’s system, using NVIDIA Jetson technology on small satellites, can detect wildfires within 60 seconds of them starting, giving first responders real-time data when every second counts.

Remote sensing and satellite image analytics powered by vision AI let organizations track ecosystem health at a scale that was simply impossible before. Similarly, geospatial data production services informed by real-time imagery are helping governments and NGOs monitor deforestation, water loss, and land damage as they happen. The 10 most powerful remote sensing techniques changing environmental management today are all being made faster and more accurate by real-time vision AI.

5. Making Cities Smarter and Safer: Urban Vision AI at Work

Smart cities are becoming real, and vision AI is a big reason why. Traffic systems use visual data to manage signal timing better, cutting urban congestion by up to 20% in tested cities. Safety systems watch public spaces for unusual incidents. Parking systems can accurately track occupancy 82% to 97% of the time, even in poor lighting.

On construction sites and factory floors, worker safety systems check in real time whether people are wearing helmets, harnesses, and safety vests, and immediately alert supervisors if something is missing. These systems have significantly reduced workplace accidents when they are fully in use. Privacy protections are also built in; faces and license plates can be blurred automatically before any footage is stored, so safety does not come at the cost of people’s privacy.
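The helmet, harness, and vest check is easy to picture as a set comparison once a detector has done its work. In this sketch the detector output is assumed: `frame` is a hypothetical dictionary mapping each worker in a video frame to the safety items the model recognized on them. The names `REQUIRED_PPE`, `missing_ppe`, and `build_alerts` are illustrative, not part of any specific product.

```python
# Items every worker is expected to wear (assumed site policy).
REQUIRED_PPE = {"helmet", "harness", "vest"}


def missing_ppe(detected_items, required=REQUIRED_PPE):
    """Return the required items that were not detected, in sorted order."""
    return sorted(set(required) - set(detected_items))


def build_alerts(frame_detections):
    """Map each worker with incomplete gear to the list of missing items."""
    alerts = {}
    for worker_id, items in frame_detections.items():
        missing = missing_ppe(items)
        if missing:
            alerts[worker_id] = missing
    return alerts


# Hypothetical detector output for one video frame.
frame = {
    "worker_1": ["helmet", "vest", "harness"],
    "worker_2": ["vest"],
}

alerts = build_alerts(frame)
print(alerts)  # only workers with something missing appear here
```

A production system would also need to handle detector confidence and brief occlusions, for example by alerting only when an item is missing across several consecutive frames.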

6. Better Manufacturing

Human inspectors get tired. They miss things. They only work during certain hours. Real-time vision AI never gets tired and never misses a beat. Computer vision systems in manufacturing have gone from basic quality checking to 3D real-time visual inspection that can spot defects smaller than a fraction of a millimeter.

Even a vision AI model with 50% accuracy in an industrial setting can save millions of dollars by catching defects that previously went completely unnoticed. As accuracy continues to improve toward and beyond human levels, the impact keeps growing. Vision AI is now critical infrastructure for any manufacturer that wants to compete on quality, safety, and efficiency.

7. From Space to Earth: Satellite Vision AI for Global Intelligence

Over 10,000 active satellites are orbiting Earth right now in 2026. Each one sends back a constant stream of visual data. Without AI, most of that data would be impossible to analyze fast enough to be useful. With vision AI, it becomes one of the most powerful tools humanity has ever had for understanding and protecting our planet.

IBM’s Prithvi-EO-2.0, built with NASA, uses vision transformers trained on multispectral satellite images to support agricultural monitoring, disaster response, and environmental tracking all at once. Studies show that multispectral vision AI models perform up to 25% better than single-band models on tasks like crop mapping and wildfire burn scar detection, a real difference that leads to faster, more accurate decisions on a global scale.
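One classic illustration of why extra spectral bands help is the Normalized Difference Vegetation Index (NDVI), which combines the red and near-infrared bands. The sketch below is not how Prithvi-EO-2.0 works internally; it only shows how two bands together can separate surfaces that look similar in one band. The reflectance values are made-up examples.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    denom = nir + red
    if denom == 0:
        return 0.0  # avoid dividing by zero on empty or masked pixels
    return (nir - red) / denom


# Hypothetical surface reflectance values between 0 and 1.
pixels = {
    "healthy_crop": {"red": 0.05, "nir": 0.50},
    "burn_scar": {"red": 0.10, "nir": 0.12},
}

results = {name: ndvi(p["nir"], p["red"]) for name, p in pixels.items()}
for name, value in results.items():
    print(f"{name}: NDVI = {value:.2f}")
```

In the red band alone these two pixels differ by only 0.05, but their NDVI values are far apart, because healthy vegetation reflects strongly in the near-infrared while burned ground does not.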

GIS mapping services that enable smarter spatial decisions and spatial databases with Web GIS capabilities are the platforms through which satellite vision AI data becomes useful intelligence for planners, conservationists, and emergency teams around the world.

8. Vision AI Meets GIS: Where Data Becomes Real Decisions

One of the most underappreciated uses of real-time vision AI is how it works together with geographic information systems (GIS). When visual intelligence from satellites and drones is placed on geographic maps, completely new kinds of insight appear. Decision-makers can see not just what is happening, but where it is happening, how fast it is changing, and what needs to be done.
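Placing a detection on a map comes down to converting a pixel position into geographic coordinates. Here is a minimal sketch for the simplest case: a north-up image with square pixels and no rotation, where the coordinates of the top-left corner and the pixel size are known. The function name and the example numbers are assumptions for illustration. Real GIS tools use a full affine transform and handle map projections.

```python
def pixel_to_lonlat(col, row, origin_lon, origin_lat, pixel_size_deg):
    """Convert a pixel (col, row) to the longitude/latitude of its center.

    Assumes a north-up image: longitude grows with the column index and
    latitude shrinks as the row index increases down the image.
    """
    lon = origin_lon + (col + 0.5) * pixel_size_deg
    lat = origin_lat - (row + 0.5) * pixel_size_deg
    return lon, lat


# Hypothetical image whose top-left corner sits at 90.0 E, 24.0 N,
# with each pixel covering 0.001 degrees.
detection_col, detection_row = 100, 200

lon, lat = pixel_to_lonlat(detection_col, detection_row, 90.0, 24.0, 0.001)
print(f"Detection at lon={lon:.4f}, lat={lat:.4f}")
```

Once every detection carries a coordinate like this, it can be layered with roads, population, or flood-zone data, which is where the "where" and "how fast" questions start to become answerable.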

GIS-powered decision support and applied spatial intelligence solutions use real-time vision AI data to support urban planning, disaster risk reduction, infrastructure development, and environmental care all at once. Organizations that are serious about environmental sustainability and ESG performance consulting are increasingly embedding vision AI and GIS intelligence into their core decision-making, because it turns passive watching into active, evidence-based care for communities and ecosystems.

The Important Ethical Questions We Must Face

These questions are not comfortable. But they are necessary. Because a technology this powerful has to work for every human being on Earth, not just some of them.

Bias, Fairness, and Building Vision AI That Works for Everyone

Real-time vision AI models are only as good as the data they learn from. When training data lacks diversity in skin tones, body types, locations, or languages, the resulting systems work poorly for people who are not well represented. This is not just a theory. It has caused real, documented harm in healthcare screening, hiring tools, and criminal justice systems.

The solution requires deliberate action: diverse training data, careful testing across different groups, ongoing monitoring after launch, and genuine accountability. Building vision AI that works fairly for everyone is not optional; it is the basic ethical requirement for any organization deploying these systems at scale.
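"Careful testing across different groups" can start with something as simple as reporting accuracy per group instead of one overall number. The sketch below assumes a hypothetical evaluation set where each record is a (group, predicted label, true label) tuple. The group names and labels are invented for illustration, and accuracy is only one of several fairness metrics a real audit would examine.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return accuracy per group from (group, predicted, actual) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


# Hypothetical evaluation results for two demographic groups.
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 0, 1), ("group_b", 1, 1),
]

scores = accuracy_by_group(records)
gap = max(scores.values()) - min(scores.values())

print(scores)
print(f"Accuracy gap between groups: {gap:.0%}")
```

Overall accuracy on this made-up data is 75%, which hides the fact that one group sees perfect results and the other only 50%. Breaking results out by group is what makes that gap visible.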

Privacy, Consent, and the Limits of Visual Surveillance

Real-time video analysis creates real privacy risks. By 2026, Europe’s GDPR and Digital Services Act will enforce stricter rules on how visual data is used and how AI decisions are explained. Laws across the US and around the world are following quickly. The most responsible vision AI systems are built with privacy protection from the start, automatically blurring sensitive identifiers before storage, as a default setting rather than an afterthought.

The lesson is clear: the extraordinary power of vision AI demands proportionate ethical responsibility. Organizations that build trust through transparent, privacy-respecting systems will be the ones that earn the right to deploy this technology at the scale its potential demands.

What the Future of Real-Time Vision AI Looks Like

The future is arriving faster than most people expect. Multimodal vision AI systems, which can process images, text, audio, and sensor data all at the same time, are becoming the new standard. They will not just detect objects. They will understand complex scenes, predict outcomes, and explain their thinking in plain language.

In April 2026, Sony AI published a landmark paper in Nature showing the first AI system that could compete with elite human table tennis players, demonstrating real-time visual sensing and physical control at a level never seen before. The bigger implications for surgical robots, autonomous safety systems, and humanitarian rescue operations are enormous.

Predictive modeling and AI development capabilities are expanding to connect vision AI with climate forecasting, infrastructure monitoring, and social risk analysis, giving organizations a complete intelligence layer over their physical environments. The AI and machine learning services ecosystem supporting these deployments is becoming the backbone of how forward-thinking governments, businesses, and conservation organizations make their most important decisions.

Explore the latest insights on AI, GIS, and climate intelligence to stay at the very edge of where this technology is heading, and discover how organizations across sectors are already putting it to work.

Conclusion

Real-time vision AI models are, without a doubt, among the great tools that technology has given us. They are making a real difference in hospitals where specialists are hard to find. They are protecting crops in fields where one bad season means hunger. They are guiding rescue teams through disaster zones where every minute counts. They are watching over ecosystems that cannot speak for themselves.

However, like every powerful gift, their value depends entirely on how we use them. With responsible development, ethical governance, inclusive design, and a genuine commitment to using this power in the service of people, not at their expense, vision AI can live up to its breathtaking potential.

Machines can now see our world with remarkable clarity. It is up to us to make sure they help us protect it, take care of it, and share its benefits with everyone.

Start exploring how AI-driven geospatial intelligence and climate solutions are already making that vision real, and consider how your organization can be part of this extraordinary moment in human history.

FAQs

What are real-time vision AI models?

Real-time vision AI models are AI systems that analyze visual data (photos, videos, satellite imagery, or drone footage) and instantly give you useful results. They use deep learning tools like convolutional neural networks (CNNs) and vision transformers to spot objects, recognize patterns, understand scenes, and predict what might happen next.

How are real-time vision AI models used in healthcare?

In healthcare, these systems analyze medical images such as X-rays, CT scans, and MRIs to detect diseases like cancer, diabetic eye disease, and brain conditions. They often match or exceed specialist doctors in accuracy. They are also used for surgical guidance, patient monitoring, staff safety in clinical settings, and remote diagnostic support in regions where specialists are not available.

What is the global market size of computer vision AI in 2026?

The global computer vision market was worth $19.82 billion in 2024 and is expected to reach $58.29 billion by 2030, growing at 19.8% per year (Grand View Research). This growth is driven by healthcare, self-driving vehicles, precision farming, smart manufacturing, and environmental monitoring.

How does vision AI support disaster response and emergency management?

Vision AI-equipped drones can check disaster damage across wide areas in just minutes, spot survivors in hard-to-reach places, map damaged infrastructure, and help plan the best evacuation routes. AI-powered satellite systems can detect wildfires within 60 seconds of ignition. All of this helps emergency teams make faster, more accurate, and more efficient life-saving decisions.

What is edge AI in computer vision, and why does it matter?

Edge AI means running vision AI directly on a local device, a camera, drone, sensor, or smartphone, instead of sending data to a distant server. This makes decisions much faster, costs less, protects privacy better, and works in places with little or no internet. This is especially important for farming, industrial, and humanitarian work in remote or resource-limited areas.

How does real-time vision AI support environmental sustainability?

Real-time vision AI processes satellite and drone images to monitor deforestation, track melting glaciers, detect ocean pollution, map biodiversity loss, and assess wildfire spread, all in near real time. When connected with GIS platforms, this visual intelligence helps organizations make data-driven environmental decisions at a speed and scale that was simply not possible before.

What are the main ethical risks of real-time vision AI?

The main concerns are: AI bias (systems working less accurately for underrepresented groups), mass surveillance and civil liberties risks, data privacy violations, and a lack of transparency about how AI makes visual decisions. Responsible development practices, diverse training data, privacy-by-design frameworks, independent auditing, and clear governance are essential for deploying vision AI ethically.